Although both of the projects presented here were exquisitely developed and well articulated, the system that we present advances on them in several respects. First, the speech engine that we use is more advanced and accurate. We have built an interface for it, as described in the relevant section, that makes it available to other users and for other purposes. Furthermore, we implement Natural Language Processing techniques in the context of live performance, and we are artistically driven by improvisatory storytelling. The platform that we have developed could be used in any performative space, as it is speaker and performance independent. Whereas the prior work looks directly at the transcribed speech, we move one step further: we analyse what is being said and incorporate the resulting data into the overall media choreography that forms the live performance.

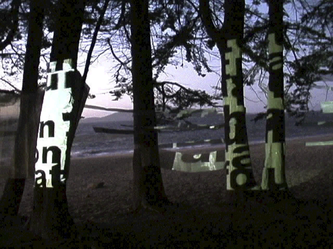

Hubbub

Description

Hubbub is a prior application of the research streams that treat speech as a computational substance for architectural construction, complementary to its role as a medium of communication. Hubbub installations may be built into a bench, a bus stop, a bar, a cafe, a school courtyard, a plaza or a park. As you walk by a Hubbub installation, the words you speak dance in projection across the surfaces according to the energy and prosody of your voice. For example, loud speech produces bold text. As you walk through a Hubbub space, your speech is picked up by microphones, partially recognised and converted to text. The associated text is projected onto the walls, furniture and other surfaces around you as animated glyphs whose dancing motion reflects the energy and prosody of your speech.

Intralocutor

Description

Intralocutor is an interactive installation that allows two participants to play with ways of visualising their speech. A real-time video capture is made of the two and projected onto a wall behind them. The video is altered so that all that observers see are the silhouettes of the two. As they begin speaking, the speech of person A becomes visible, moves from person A's mouth and interacts with person B's silhouette. Depending on qualities of person A's speech such as speed, volume, pitch and rhythm, her words might bounce off person B's silhouette, penetrate it, or simply stick to the "skin". The appearance of the words also responds to the speech qualities: for instance, words said with stress appear elongated and shaky, words said with a heightened volume expand, and words said with a quickened rhythm come out crowded together.
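
To make the kind of mapping described above concrete, the following is a minimal illustrative sketch of how per-word speech qualities could drive the appearance of a projected word. It is not the Intralocutor implementation, which is not documented here. The names `WordProsody`, `WordStyle` and `style_for`, the normalisation of every feature to the range 0 to 1, and all numeric constants are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class WordProsody:
    """Hypothetical per-word speech features, each normalised to 0..1."""
    volume: float
    stress: float
    rate: float


@dataclass
class WordStyle:
    """Hypothetical rendering parameters for one projected word."""
    scale: float           # overall size multiplier
    stretch: float         # horizontal elongation multiplier
    jitter: float          # shake amplitude, in pixels (assumed unit)
    letter_spacing: float  # spacing multiplier; below 1.0 crowds the letters


def clamp01(x: float) -> float:
    """Restrict a feature value to the assumed 0..1 range."""
    return max(0.0, min(1.0, x))


def style_for(p: WordProsody) -> WordStyle:
    """Map speech qualities to visual qualities (all constants are assumed)."""
    volume, stress, rate = clamp01(p.volume), clamp01(p.stress), clamp01(p.rate)
    return WordStyle(
        scale=1.0 + volume,               # louder words expand
        stretch=1.0 + stress,             # stressed words appear elongated
        jitter=4.0 * stress,              # stressed words also shake
        letter_spacing=1.0 - 0.5 * rate,  # quick speech comes out crowded
    )
```

For instance, under these assumptions `style_for(WordProsody(volume=1.0, stress=0.0, rate=0.0))` yields a word drawn at twice its base size with no stretch or shake. A linear mapping is chosen only for simplicity; an actual installation might well use non-linear or physically simulated responses.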